Imagine unleashing AI models that scream verifiability without spilling a drop of private data. That's the brutal reality Inference Labs delivers with the JSTprove framework, a zkML powerhouse that speeds up proof generation through custom Circom circuits. Developers, this isn't just another tool; it's your ticket to crush black-box AI doubts in decentralized networks like Bittensor. Forget crypto PhDs; JSTprove's CLI rips through the complexity and hands you proofs of inference that verify lightning-fast.

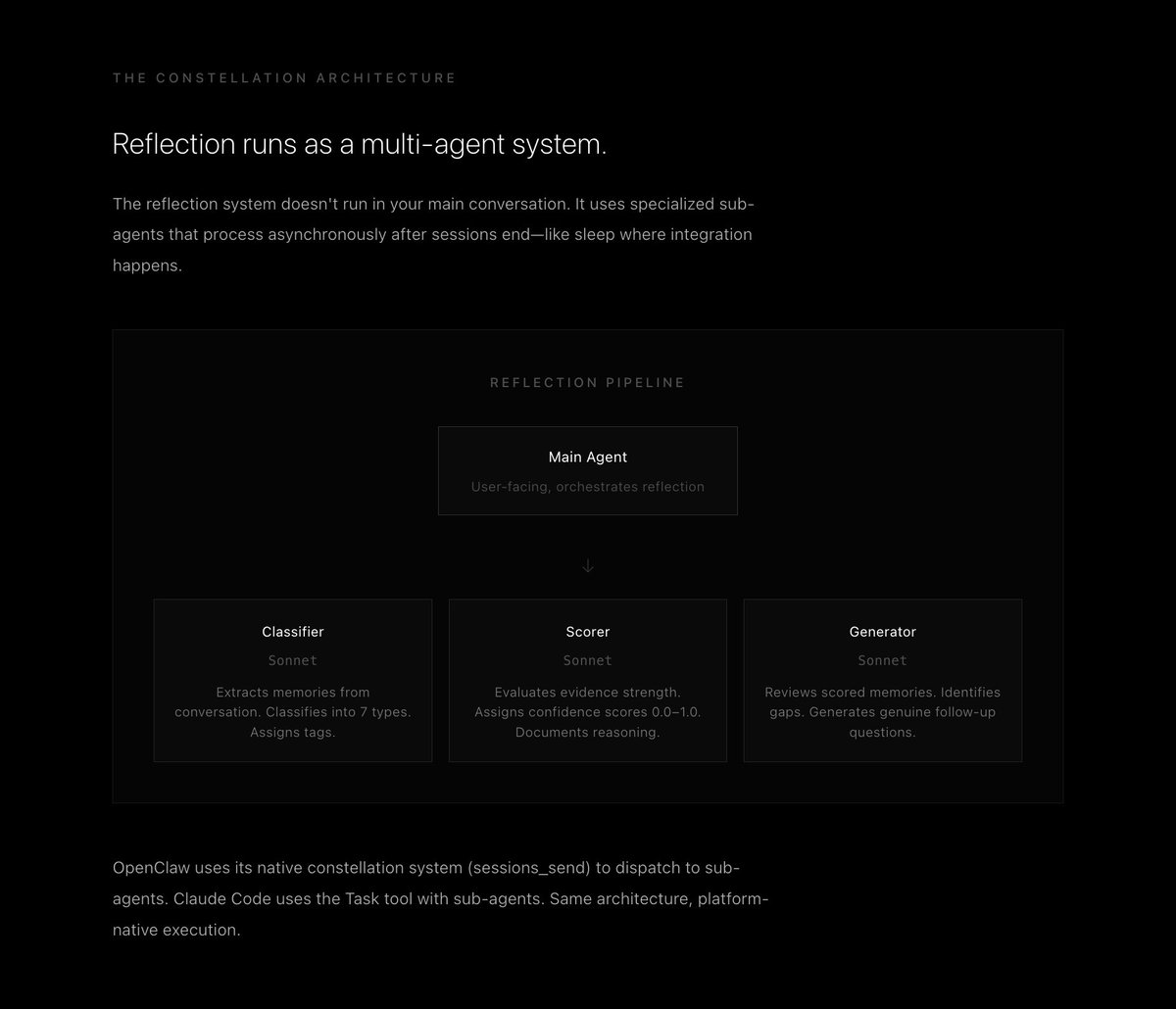

Inference Labs, fresh off a $6.3M funding haul, isn't playing small. Their zkML arsenal targets the trustless future where AI outputs get cryptographically locked down. JSTprove swallows ONNX models whole, quantizes them aggressively, compiles the custom Circom circuits via the Expander Compiler Collection (ECC), generates witnesses, and spits out proofs using Polyhedra Network's Expander backend. Every step? Explicit, reproducible, debuggable. You dictate the path, snag artifacts like quantized models and circuits, and verify your AI behaves exactly as trained. No smoke, no mirrors, pure dominance.

JSTprove's Pipeline: From Model to Bulletproof Proof

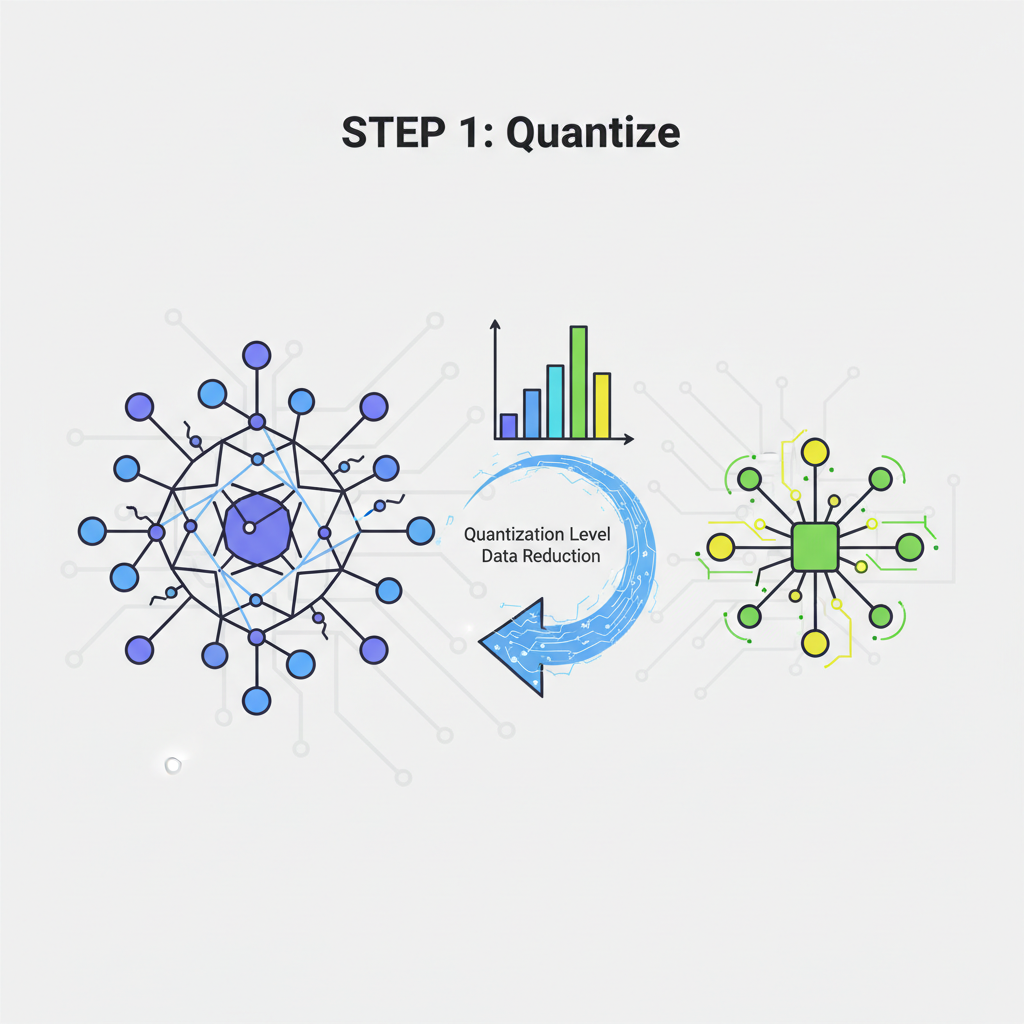

Charge into zkML with JSTprove's end-to-end fury. Start with your ONNX beast and watch it quantize to slash compute demands while preserving accuracy. Then circuit construction hits hard: custom Circom circuits tailored for ML ops like convolutions, ReLUs, max pooling, and dense layers. This isn't generic; it's precision-engineered for speed and size. Witness generation feeds in your inputs without leaks, and Expander proves it all in a snap. CLI simplicity? One command runs the beast, and Bittensor zkML integration becomes effortless.
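To make the quantization step concrete, here is a minimal fixed-point sketch in plain Python. It is not JSTprove's code: the function names, the power-of-two scale, and the 8 fractional bits are all assumptions chosen for illustration. The point is that once weights and inputs are integers, a dense layer becomes integer multiply-adds, which is the kind of arithmetic a circuit can express.

```python
# Illustrative fixed-point quantization; names and parameters are hypothetical,
# not taken from JSTprove.

def quantize(values, frac_bits):
    """Map floats to integers at scale 2**frac_bits."""
    scale = 1 << frac_bits
    return [round(v * scale) for v in values]

def dequantize(values_q, frac_bits):
    """Map fixed-point integers back to floats."""
    return [v / (1 << frac_bits) for v in values_q]

def dense_int(weights_q, inputs_q, bias_q, frac_bits):
    """Integer-only dense layer.

    A product of two scale-2**f numbers sits at scale 2**(2f), so the bias is
    shifted up to match and the sum is shifted back down to scale 2**f.
    """
    out = []
    for row, b in zip(weights_q, bias_q):
        acc = sum(w * x for w, x in zip(row, inputs_q)) + (b << frac_bits)
        out.append(acc >> frac_bits)  # floor rescale
    return out

FRAC_BITS = 8
weights = [[0.5, -0.25], [1.0, 2.0]]
inputs = [1.0, 2.0]
bias = [0.1, -0.3]

weights_q = [quantize(row, FRAC_BITS) for row in weights]
inputs_q = quantize(inputs, FRAC_BITS)
bias_q = quantize(bias, FRAC_BITS)

approx = dequantize(dense_int(weights_q, inputs_q, bias_q, FRAC_BITS), FRAC_BITS)
exact = [sum(w * x for w, x in zip(row, inputs)) + b for row, b in zip(weights, bias)]
```

More fractional bits shrink the gap between `approx` and `exact` but widen every integer the circuit has to handle, so the bit width is the knob that trades accuracy against proving cost.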

These results show JSTprove's readiness for practical zkML applications, making it one of the most developer-accessible frameworks in this space.

Transparency fuels the fire. JSTprove exposes every artifact, letting you dissect circuits or tweak witnesses. Built for ML engineers, not cryptographers, it hides the ZK grind behind intuitive commands. It powers the Omron subnet's 283M+ proofs on Bittensor, validating real-time AI across decentralized nodes. Scale? The world's largest zkML proving cluster, run by incentivized Bittensor miners since April 2024.

Custom Circom Circuits: Your Optimization Weapon

Here’s where JSTprove flexes hardest: optimization through custom Circom circuits. Traditional zkML chokes on bloated circuits; JSTprove surgically crafts them via ECC compilation, minimizing constraints for screaming-fast proofs. Convolution layers? Optimized to shred memory use. ReLUs? Linearized for proof efficiency. Fully connected layers? Packed tight. Pair that with Expander's backend, and you're proving heavy inference in minutes, not days. This edge? Critical for Bittensor zkML integration, where subnets demand real-time verifiability.
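Why do non-linear ops like ReLU dominate constraint counts? A generic way to check a ReLU inside an arithmetic circuit is bit decomposition, sketched below in Python rather than Circom. This is a textbook technique, not a description of JSTprove's actual circuits; the modulus `P`, the 16-bit range, and the function names are assumptions made for illustration.

```python
# Generic bit-decomposition ReLU check over a prime field (illustrative only).

P = 2**61 - 1  # illustrative prime modulus, not tied to any specific backend

def relu_witness(x, n_bits=16):
    """Prover side: compute the bits and output for a signed n-bit value x."""
    half = 1 << (n_bits - 1)
    assert -half <= x < half, "x must fit in the signed range"
    shifted = x + half  # now in [0, 2**n_bits); top bit is 1 iff x >= 0
    bits = [(shifted >> i) & 1 for i in range(n_bits)]
    y = x if x >= 0 else 0
    return bits, y

def relu_constraints_hold(x_f, y_f, bits, n_bits=16):
    """Verifier-style check of the constraints a circuit would enforce."""
    if len(bits) != n_bits:
        return False
    # 1. Every bit is boolean: b * (b - 1) == 0
    if any((b * (b - 1)) % P != 0 for b in bits):
        return False
    # 2. The bits recompose to x + 2**(n_bits - 1)
    recomposed = sum(b << i for i, b in enumerate(bits)) % P
    if recomposed != (x_f + (1 << (n_bits - 1))) % P:
        return False
    # 3. The output is x when the sign bit says "non-negative", else 0
    return y_f == (bits[-1] * x_f) % P

bits_neg, y_neg = relu_witness(-5)
bits_pos, y_pos = relu_witness(7)
ok_neg = relu_constraints_hold(-5 % P, y_neg % P, bits_neg)
ok_pos = relu_constraints_hold(7 % P, y_pos % P, bits_pos)
cheat = relu_constraints_hold(-5 % P, 5, bits_neg)  # wrong output is rejected
```

Each ReLU here costs roughly one constraint per bit plus two more, which is why shaving bits off the quantized range or restructuring activations pays off directly in proof time.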

Real-World Deployment: Bittensor's zkML Battlefield

Inference Labs doesn't theorize; they deploy. The Omron subnet dominates as Bittensor's zkML proving juggernaut, churning out proofs across nodes. DSperse decentralizes inference, with nodes competing to verify AI without trusting blindly. $6.3M fuels this DeAI crusade, alongside a partnership with Lagrange's DeepProve for cryptographically sound models. Black-box AI? Obliterated. Developers deploy verifiable agents on-chain, off-chain, anywhere.

Grab JSTprove from GitHub and dominate zkML proof generation today. Notebooks unpack ZKP math, proof systems, and optimizations, arming you to tweak and conquer. Inference Labs' JSTprove framework isn't locked in ivory towers; it's open-source fury for builders hungry for zkML supremacy.

Hands-On Domination: Prove Your First Model Now

Enough talk, seize control. JSTprove's CLI turns ML engineers into zk warriors overnight. Feed it an ONNX model, specify quantization bits, pick your ops, and unleash the pipeline. Custom Circom circuits emerge optimized, witnesses compute privately, Expander proofs verify instantly. Debug? Artifacts at your fingertips. Deploy to Bittensor subnets, watch nodes battle for validation. This is Bittensor zkML integration on steroids, powering DSperse's targeted proofs where full-model proving wastes cycles.

DSperse elevates this: modularize your model. Prove just the safety gate on user prompts or the anomaly detector in trading signals. It's backend agnostic, so you can swap Expander for Groth16 or Plonk per layer. Proof times plummet 5x, memory halved. Real-time AI on decentralized nets? Locked in. Inference Labs' Omron evolution has cranked out 283M+ proofs, with Bittensor's proving beast incentivizing miners to fuel the cluster.
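The modular idea can be sketched in a few lines of Python. This is a toy illustration, not the DSperse API: `run_sliced`, `commit`, and the slice names are hypothetical, and SHA-256 commitments stand in for whatever a real proof would bind to. It shows a model split into named slices where only the selected slice produces a record to be proven.

```python
# Toy model slicing with selective proving; all names are hypothetical.
import hashlib
import json

def commit(values):
    """Stand-in commitment: hash of the JSON-encoded values."""
    return hashlib.sha256(json.dumps(values).encode()).hexdigest()

def run_sliced(slices, x, prove):
    """Run every slice in order; record input/output commitments only for
    the slices named in `prove`, which are the ones a prover would cover."""
    records = []
    for name, fn in slices:
        y = fn(x)
        if name in prove:
            records.append({"slice": name, "input": commit(x), "output": commit(y)})
        x = y
    return x, records

def features(v):
    # Cheap preprocessing slice, left unproven in this example
    return [2 * a for a in v]

def safety_gate(v):
    # The slice worth proving: a simple integer threshold
    return [1 if sum(v) > 10 else 0]

model = [("features", features), ("safety_gate", safety_gate)]
output, records = run_sliced(model, [1, 2, 3], prove={"safety_gate"})
```

Because each record carries the commitment to its input, a verifier can still tie the proven slice to the data that flowed into it even though the earlier slice was skipped.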

Partnership Power-Ups: Lagrange DeepProve Fusion

Inference Labs charges into alliances. Lagrange's DeepProve slots into the ecosystem, cryptographically nailing model fidelity sans parameter leaks. Deploy AI agents that scream 'verified' on-chain and shatter black-box myths. This duo hammers out DeAI standards: verifiable outputs for off-chain compute, trustless agents in Web3. The $6.3M war chest drives GPU acceleration and mega-model support, future-proofing your edge.

Inference Labs is excited to announce the public release of JSTprove, our framework for Zero-Knowledge Machine Learning (zkML).

arXiv drops the blueprint: JSTprove orchestrates everything from quantization to proof, with CLI simplicity masking the crypto depths. Semantic Scholar echoes it: an end-to-end pipeline that exposes its guts for tinkerers. Our Crypto Talk hails it as verifiable AI for devs, no data spills. Subnet Alpha spotlights DSperse's node swarm verifying inferences in decentralized fashion.

Scalability roars. World's largest zkML cluster via Bittensor miners, real-time proofs across subnets. Taha Murtaza nails it: JSTprove bridges AI speed with ZK trust, Omron's 283M proofs proving the point. GitHub notebooks? Goldmines for protocol hacks, ZKP dissections, optimization strikes.

Future Assault: Unbreakable AI Unleashed

JSTprove eyes larger architectures, GPU blasts for hyperscale proofs. DSperse modularity scales to trillion-param beasts, proving slivers surgically. Partnerships multiply: more backends, deeper Bittensor ties, Web3 inference explosions. Developers, this is your arena. Forge verifiable derivatives pricing like my options plays, leak-proof edges in high-stakes trades. ZKML crushes opacity, empowers decentralized brains that think privately, prove publicly.

Charge in. Clone JSTprove, spin up a proof, deploy to DSperse nets. Bittensor awaits your verifiable AI onslaught. Inference Labs hands you the weapons; now dominate the trustless frontier. Your models, unbreakable. Your edge, unmatched. zkML isn't coming, it's here, ripping through barriers. Get building, crush the competition.
