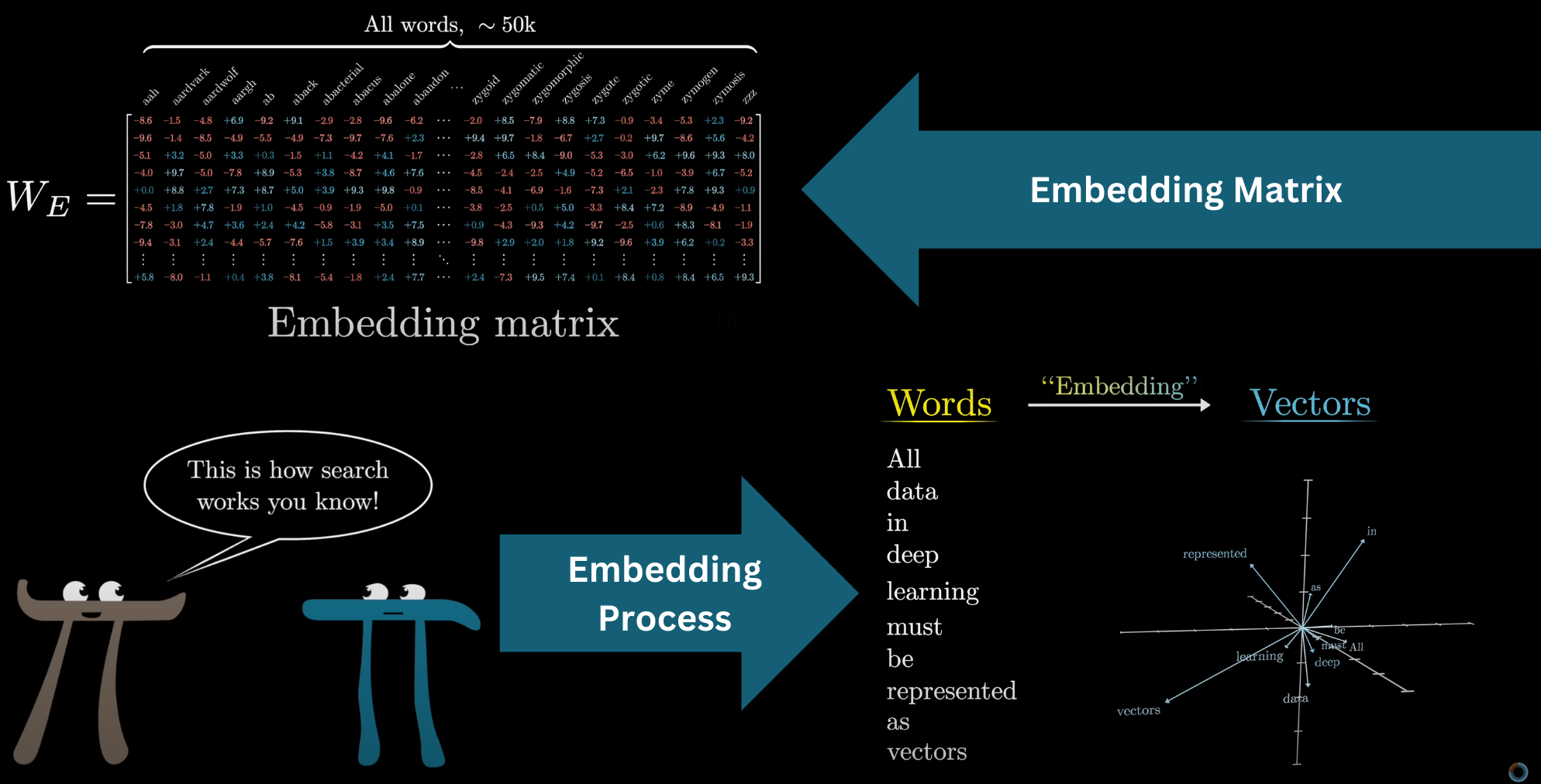

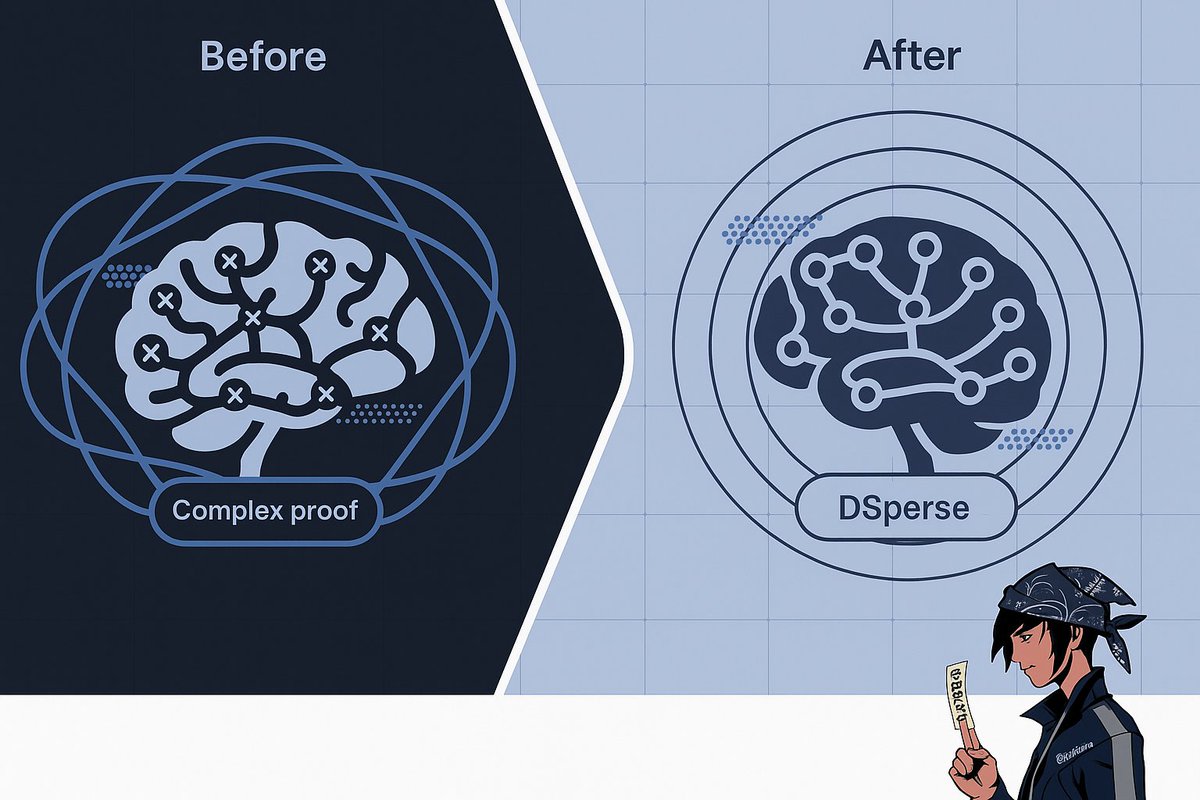

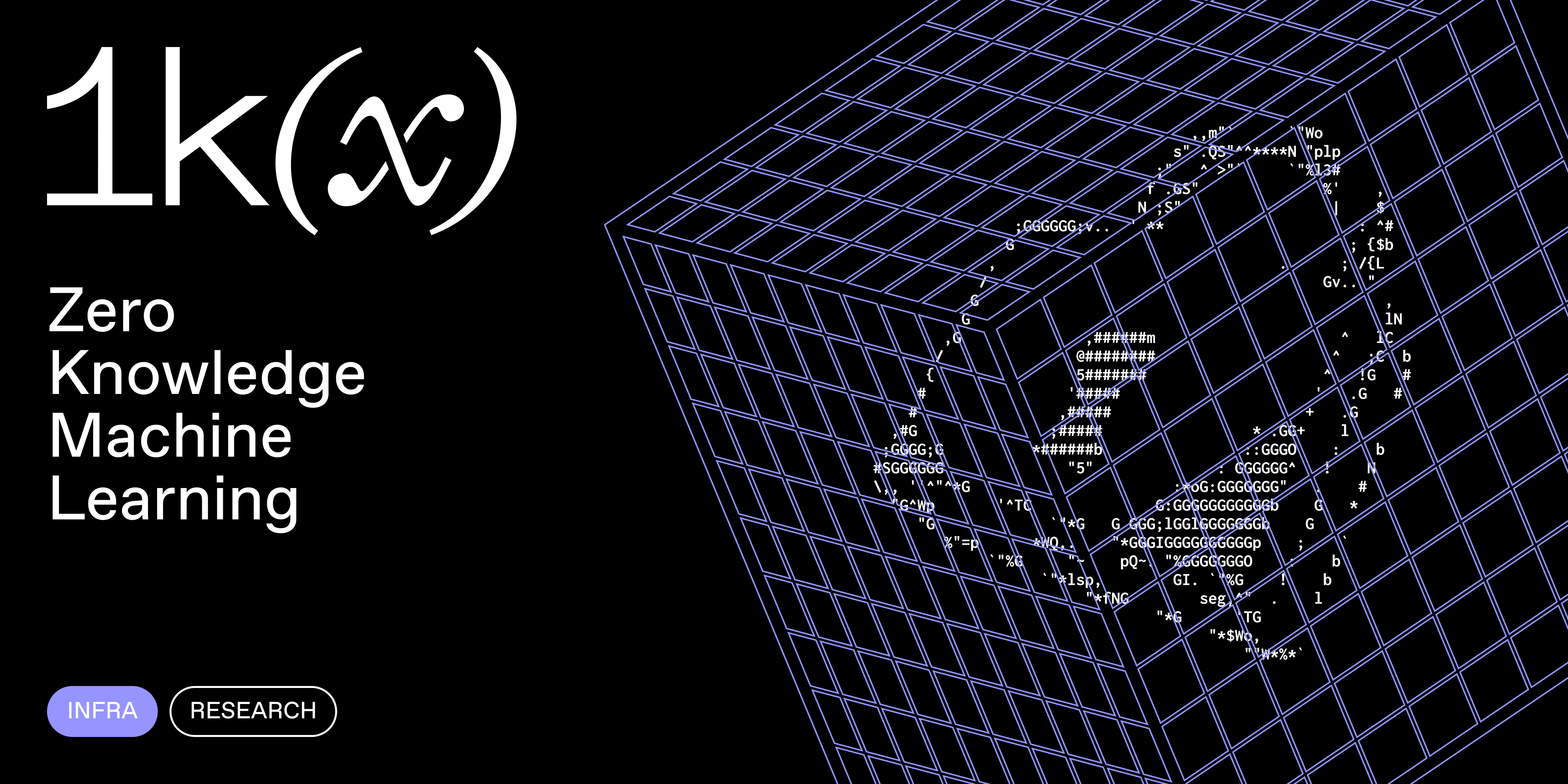

In the high-stakes world of decentralized AI, full zero-knowledge proofs for entire machine learning models are like swinging a sledgehammer at a nail. They work, but they're computationally brutal, slowing down everything from on-chain deployments to real-time inference. Enter targeted ZK verification in zkML: a smarter way to prove just the critical model slices that matter most. This selective approach to zk proofs for machine learning slashes costs while keeping the proven slices verifiable. Recent frameworks like DSperse show this isn't just theory; it's deployable now, and zkML benchmarks already report up to 5x faster verification and 22x smaller proofs compared to legacy systems.

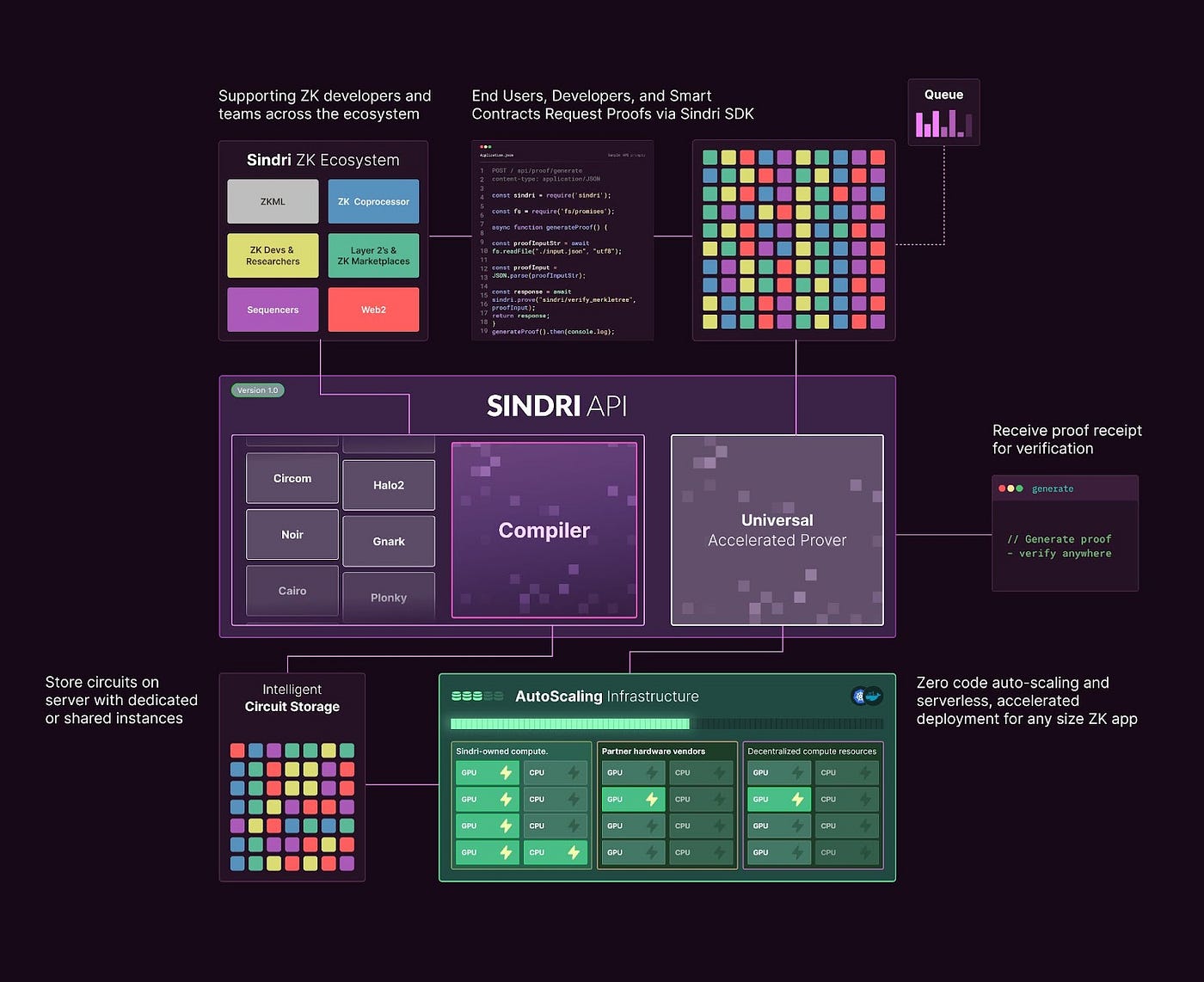

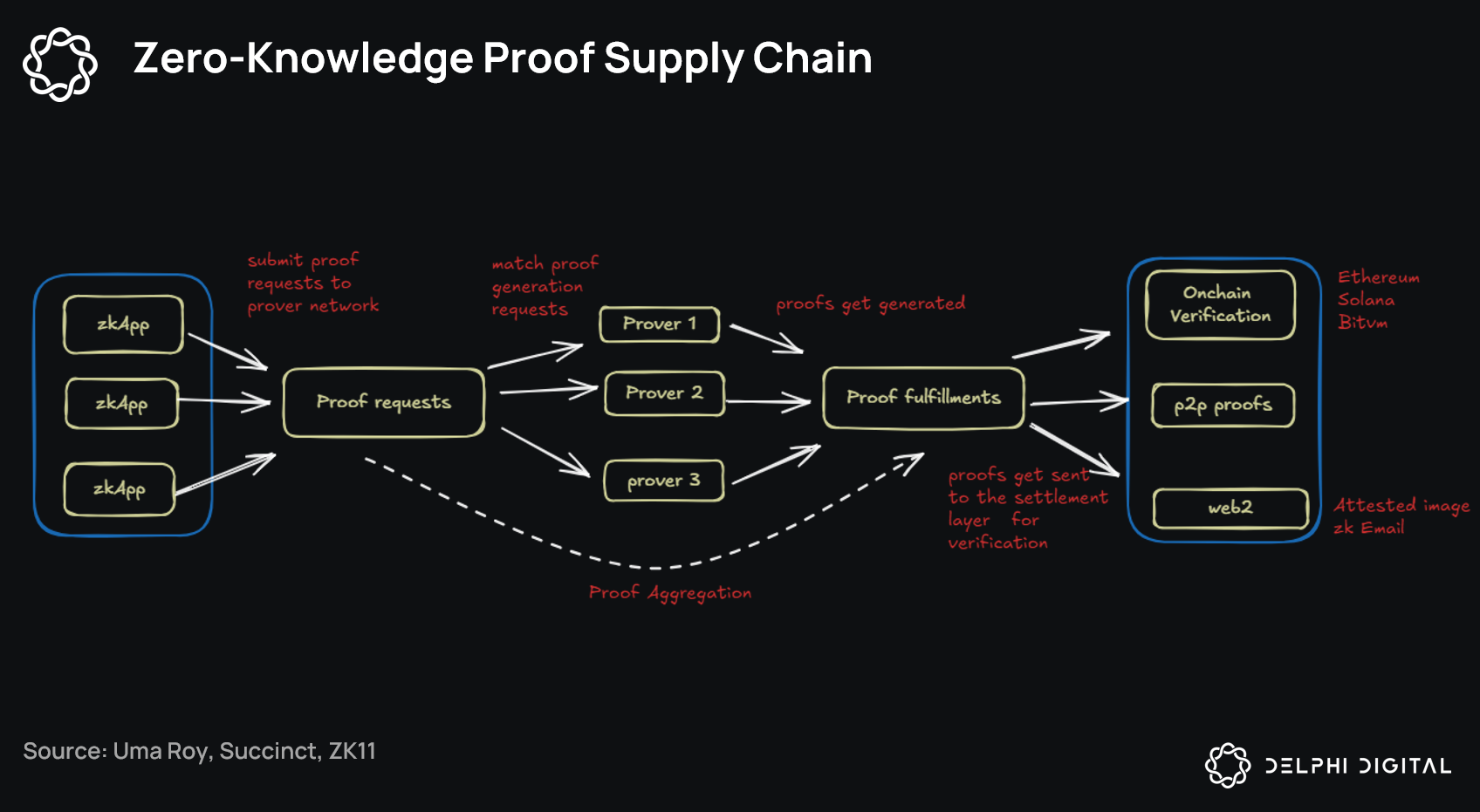

Picture this: your model has layers upon layers, but only a few slices handle the high-risk decisions, like fraud detection in DeFi trades or bias checks in lending protocols. Why circuitize the whole beast when you can zero in on those hotspots? DSperse, launched in August 2025, nails this with a modular setup for distributed inference. It lets provers generate zk proofs for chosen subcomputations, dodging the rigidity of full-model circuitization. The DSperse paper on arXiv presents it as a pragmatic framework for scaling targeted zkML verification across diverse environments, making efficient zkML for decentralized AI a reality.

DSperse Framework: Modular Power for Selective Proofs

DSperse isn't just another zkML tool; it's a game-changer for Inference Labs' zkML techniques. By breaking inference into verifiable slices, it supports strategic verification where full proofs would choke networks. Think heterogeneous setups: mix Groth16 proofs with custom neural nets, all verified without bloating the chain. Benchmarks highlight its edge; prior work like ZKML systems already hit 5x verification speedups, but DSperse pushes further by targeting only what's essential. In volatile crypto markets, where I cut my teeth on high-frequency trades, this means private order execution without trusting black-box models.

DSperse's Key zkML Wins

- Modular subcomputation proofs cut overhead: the 5x faster verification reported in ZKML benchmarks works out to roughly 80% less verification time.

- Scalable verification powers decentralized networks without full-model bottlenecks.

- No full retraining for slice updates—leverage residual learning on critical data.

- Seamless integration with LayerEdge for normalized zk-proof verification.

- Real-time Web3 apps unlocked by efficient slice proofs, tackling compute hurdles.

This framework shines in practice. Provers handle slice-specific circuits, and verifiers check composable proofs. No more monolithic proofs burning through gas. Combine it with sparsity-aware protocols from the Cryptology ePrint Archive, and you've got zk-friendly models that fly.
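
To make the slice idea concrete, here is a minimal sketch of inference split into slices, with a proof requested only for the slice marked critical. Everything in it is an assumption for illustration: the `Slice` and `SliceRecord` types, the toy slice functions, and the SHA-256 "commitments" are hypothetical stand-ins, and the proof is a placeholder string rather than a real zk proof. It is not DSperse's API.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

Vector = List[float]


def commit(values: Vector) -> str:
    """Hash a tensor. A stand-in for a real cryptographic commitment."""
    payload = json.dumps([round(v, 6) for v in values]).encode()
    return hashlib.sha256(payload).hexdigest()


@dataclass
class Slice:
    name: str
    fn: Callable[[Vector], Vector]
    prove: bool  # True only for slices judged critical


@dataclass
class SliceRecord:
    name: str
    input_commitment: str
    output_commitment: str
    proof: Optional[str]  # placeholder tag, NOT a real zk proof


def run_sliced_inference(slices: List[Slice], x: Vector) -> Tuple[Vector, List[SliceRecord]]:
    """Run slices in order, recording commitments and proving only flagged slices."""
    records = []
    for s in slices:
        y = s.fn(x)
        # A real prover would emit a succinct proof here (hypothetical placeholder).
        proof = f"placeholder-proof:{s.name}" if s.prove else None
        records.append(SliceRecord(s.name, commit(x), commit(y), proof))
        x = y
    return x, records


def chain_is_consistent(records: List[SliceRecord]) -> bool:
    """Each slice's input commitment must match the previous slice's output."""
    return all(a.output_commitment == b.input_commitment
               for a, b in zip(records, records[1:]))


# Toy three-slice model; only the middle "risk_head" slice is proven.
slices = [
    Slice("embed", lambda v: [2.0 * a for a in v], prove=False),
    Slice("risk_head", lambda v: [max(0.0, a - 1.0) for a in v], prove=True),
    Slice("aggregate", lambda v: [sum(v)], prove=False),
]
output, records = run_sliced_inference(slices, [0.25, 1.0, 2.0])
```

The design point is the chaining: because each record commits to its input and output, a verifier can check that the proven slice sits in the right place in the pipeline even though its neighbours carry no proof.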

Slice-Based Learning: Precision Compute on Critical Data

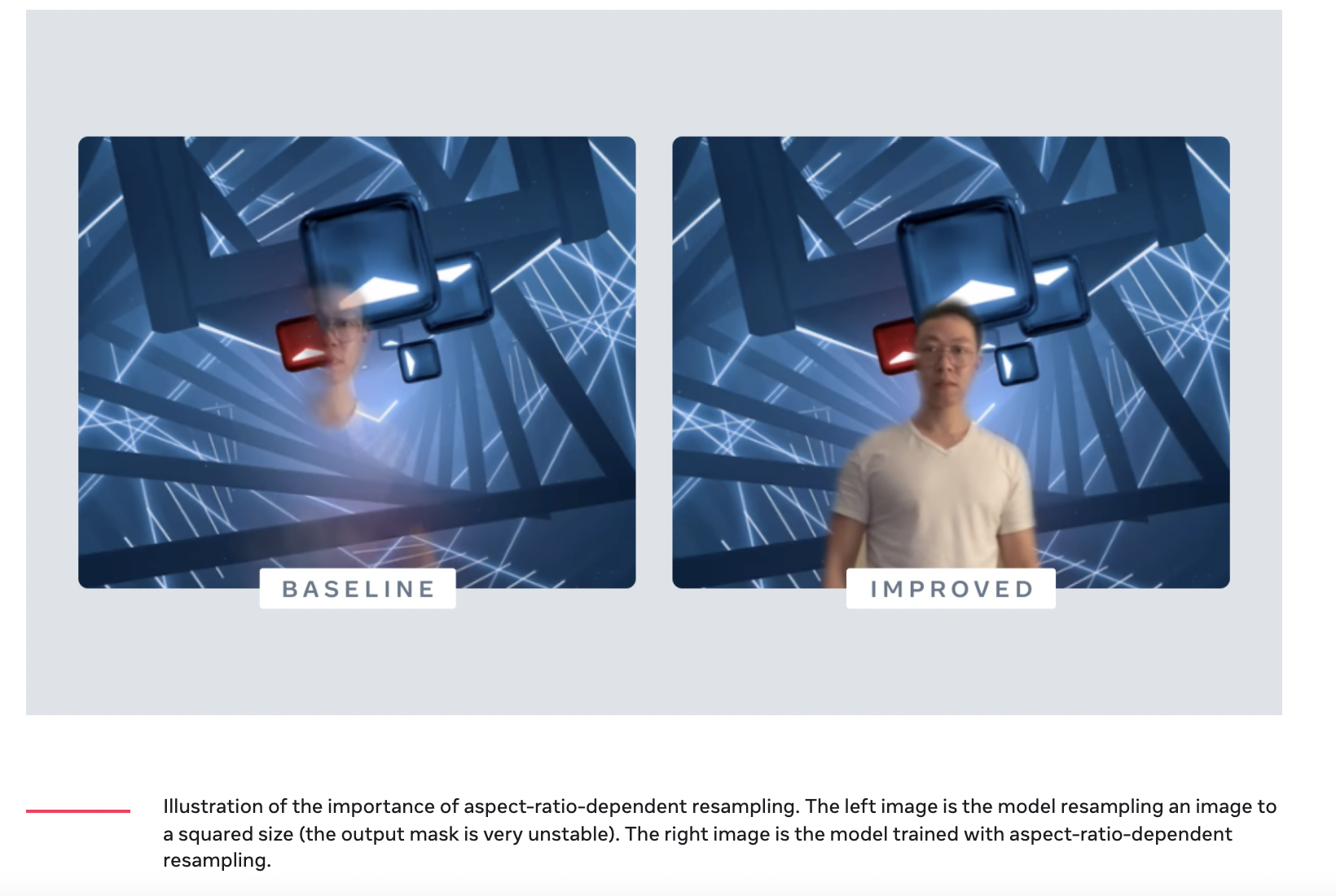

Targeted verification pairs perfectly with slice-based learning, a programming model that pours extra capacity into tricky data subsets. Originating from 2019 arXiv work, it uses residual learning to boost performance on critical slices without overhauling the entire model. In zkML, this means circuitizing only those residuals for proof generation. The result? Models that adapt to edge cases, like rare market crashes or adversarial inputs, with verifiable outputs.
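
A heavily simplified sketch of that idea: a slice function flags the tricky examples, and a small residual term is added on top of the base model only for them. The model, the slice rule, and the coefficients below are all made up for illustration, and the original slice-based learning work combines slice experts in a more sophisticated way than this hard gate.

```python
from typing import Dict, Tuple

Example = Dict[str, float]


def base_model(x: Example) -> float:
    """Hypothetical base risk score, shared by all examples."""
    return 0.5 * x["amount"]


def in_large_trade_slice(x: Example) -> bool:
    """Slice function: flags the critical subset (an assumed rule)."""
    return x["amount"] > 100.0


def large_trade_residual(x: Example) -> float:
    """Extra capacity applied only inside the slice (assumed form)."""
    return 0.25 * (x["amount"] - 100.0)


def predict(x: Example) -> Tuple[float, bool]:
    """Return the score and whether the residual (the part to prove) fired."""
    score = base_model(x)
    if in_large_trade_slice(x):
        return score + large_trade_residual(x), True
    return score, False


small_score, small_flag = predict({"amount": 50.0})
large_score, large_flag = predict({"amount": 200.0})
```

Under this framing, the boolean flag tells you which inferences touched the residual, which is the computation you would circuitize and prove.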

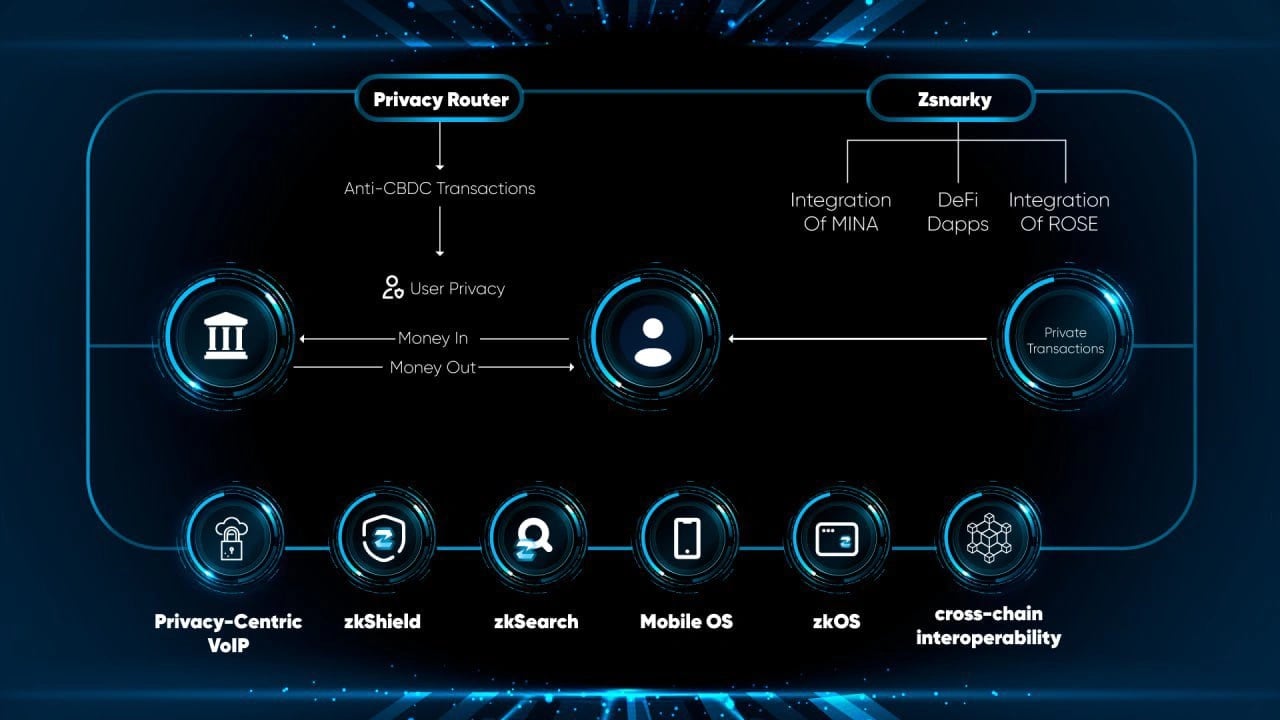

LayerEdge's verification layer amps this up. It normalizes proofs from any protocol, ensuring only valid ones hit the chain. This modularity future-proofs targeted zkML verification against new proof systems. I've seen similar efficiency in HFT: focus compute on momentum plays, ignore the noise. Here, it's the same for AI; prove the slices driving most of the value. Verify, don't trust.

Overcoming Hurdles in Targeted ZKML Deployments

Don't get me wrong; selective zk proofs for machine learning aren't flawless. Computational overhead for proof generation can still bite, especially for complex slices, as Symbolic Capital notes. Real-time apps demand optimizations like quantization or sparsity. And model tweaks? They trigger slice re-proving, a resource hog if not managed.
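
Quantization matters because proof circuits work over integers, not floats. A minimal fixed-point sketch, assuming 8 fractional bits (the bit width and function names are illustrative choices, not taken from any specific zkML toolchain):

```python
from typing import List


def quantize(values: List[float], frac_bits: int = 8) -> List[int]:
    """Map floats to fixed-point integers with `frac_bits` fractional bits."""
    scale = 1 << frac_bits
    return [round(v * scale) for v in values]


def quantized_dot(w_q: List[int], x_q: List[int], frac_bits: int = 8) -> float:
    """Integer-only dot product; the accumulator carries scale squared."""
    acc = sum(w * x for w, x in zip(w_q, x_q))  # this is what a circuit would compute
    return acc / float((1 << frac_bits) ** 2)   # dequantize outside the circuit


weights = [0.5, -0.25, 0.125]
inputs = [1.0, 2.0, 4.0]
w_q = quantize(weights)
x_q = quantize(inputs)
result = quantized_dot(w_q, x_q)
```

These example values are exactly representable, so nothing is lost. A value like 0.3 would round to 77/256, and that rounding error is the accuracy cost you trade for a cheaper circuit.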

Smart teams counter this with hybrid strategies: pre-compute proofs for static slices, dynamically prove volatile ones. Tools like zkVerify optimize verification at scale, turning potential bottlenecks into throughput machines. In my trading days, we'd slice data feeds for alpha signals; verifying zkML model slices does the same for AI, zeroing in on high-impact computations.
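
One way to picture the hybrid strategy is a proof cache keyed on everything a proof depends on. The sketch below assumes a proof is tied to the slice name, its weights, and its inputs, so "static" here means all three are stable. The `HybridProver` class and the prover signature are hypothetical, and the prover returns a placeholder tag rather than a real proof.

```python
import hashlib
from typing import Callable, Dict

# Hypothetical prover signature: (slice_name, weights, inputs) -> proof string.
Prover = Callable[[str, bytes, bytes], str]


def placeholder_prover(slice_name: str, weights: bytes, inputs: bytes) -> str:
    """Stand-in for an expensive zk prover; returns a tag, not a real proof."""
    digest = hashlib.sha256(weights + b"|" + inputs).hexdigest()[:16]
    return f"placeholder-proof:{slice_name}:{digest}"


class HybridProver:
    """Cache proofs for static slices; always re-prove volatile ones."""

    def __init__(self, prover: Prover) -> None:
        self._prover = prover
        self._cache: Dict[str, str] = {}
        self.prover_calls = 0

    def get_proof(self, slice_name: str, weights: bytes, inputs: bytes, static: bool) -> str:
        key = hashlib.sha256(
            b"\x00".join([slice_name.encode(), weights, inputs])
        ).hexdigest()
        if static and key in self._cache:
            return self._cache[key]
        self.prover_calls += 1
        proof = self._prover(slice_name, weights, inputs)
        if static:
            self._cache[key] = proof
        return proof


hybrid = HybridProver(placeholder_prover)
hybrid.get_proof("embed", b"w-v1", b"ref-batch", static=True)     # proved once
hybrid.get_proof("embed", b"w-v1", b"ref-batch", static=True)     # served from cache
hybrid.get_proof("risk_head", b"w-v1", b"order-1", static=False)  # always re-proved
hybrid.get_proof("embed", b"w-v2", b"ref-batch", static=True)     # new weights, re-proved
```

Because the weights are part of the cache key, a model tweak invalidates only the slices it touches, which keeps re-proving costs proportional to the change.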

LayerEdge and zkVerify: The Verification Backbone

LayerEdge steps up as the unsung hero here. Its verification layer processes zk-proofs from any protocol, normalizing them for chain submission. This means DSperse slices play nice with Groth16, Plonk, or whatever's next. No vendor lock-in, just efficient zkML for decentralized AI. Pair it with zkVerify's modular L1, and you've got a universal verifier handling millions of proofs per second. Data from Delphi Digital pegs this setup for massive scale, crucial when Inference Labs' zkML techniques go mainstream.
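
What "normalizing" could look like in practice: proofs arrive in system-specific layouts and get mapped into one common envelope before a verifier is chosen. This sketch is purely illustrative. The `NormalizedProof` type, the raw field names, and the verifier registry are assumptions, not LayerEdge's or zkVerify's actual formats or APIs, and the registered check is a dummy rather than real proof verification.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class NormalizedProof:
    system: str               # e.g. "groth16" or "plonk"
    proof: bytes              # proof bytes in one canonical encoding
    public_inputs: List[int]  # public inputs as plain integers


def normalize(system: str, raw: dict) -> NormalizedProof:
    """Map a system-specific raw proof (assumed layouts) into the envelope."""
    system = system.lower()
    if system == "groth16":
        # Assumed layout: three hex-encoded proof elements plus decimal inputs.
        proof = bytes.fromhex(raw["a"] + raw["b"] + raw["c"])
        inputs = [int(v) for v in raw["inputs"]]
    elif system == "plonk":
        # Assumed layout: one hex blob plus hex-encoded public signals.
        proof = bytes.fromhex(raw["proof_hex"])
        inputs = [int(v, 16) for v in raw["public_signals"]]
    else:
        raise ValueError(f"unsupported proof system: {system}")
    return NormalizedProof(system, proof, inputs)


Verifier = Callable[[NormalizedProof], bool]


def admit(p: NormalizedProof, verifiers: Dict[str, Verifier]) -> bool:
    """Only proofs with a registered verifier that accepts them get through."""
    verifier = verifiers.get(p.system)
    return verifier is not None and verifier(p)


g16 = normalize("Groth16", {"a": "01", "b": "02", "c": "03", "inputs": ["7"]})
plonk = normalize("plonk", {"proof_hex": "ff", "public_signals": ["0x0a"]})
# Dummy length check standing in for real Groth16 verification.
verifiers: Dict[str, Verifier] = {"groth16": lambda p: len(p.proof) == 3}
```

Adding a new proof system then means one more branch in `normalize` and one more registry entry, which is the no-lock-in property the paragraph above is pointing at.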

zkSync Technical Analysis Chart

Analysis by Market Analyst | Symbol: BINANCE:ZKUSDT | Interval: 1D | Drawings: 5

Technical Analysis Summary

To annotate this ZKUSDT chart effectively in my balanced technical style, start by drawing a primary downtrend line connecting the swing high at approximately 2026-01-15 (0.75) to the recent low at 2026-02-12 (0.041), using 'trend_line'. Add horizontal lines for key support at 0.038 (strong) and resistance at 0.060 and 0.100 using 'horizontal_line'. Mark the sharp breakdown with an 'arrow_mark_down' around 2026-01-28. Use 'rectangle' for the late consolidation/distribution zone from 2026-02-01 to 2026-02-12 between 0.035-0.055. Place 'callout' texts for volume decrease and MACD bearish divergence. Add 'long_position' entry zone at 0.038-0.042 if reversal confirms, with 'stop_loss' below 0.035 and profit target at 0.060. Use 'text' for labeling levels and 'vertical_line' for potential news impact date.

Risk Assessment: high

Analysis: The chart is in a downtrend with lower lows, and low volume gives no reversal signal. zkML news is positive, but price is ignoring it, and volatility risk is high.

Market Analyst's Recommendation: Stay sidelined or short with tight stops; monitor 0.038 support for long only on bullish confirmation

Key Support & Resistance Levels

📈 Support Levels:

- $0.038 (strong): support at recent swing low, tested multiple times

- $0.035 (weak): support below recent lows, potential further drop

📉 Resistance Levels:

- $0.06 (moderate): resistance at Feb consolidation high

- $0.10 (moderate): key resistance from early Feb retracement

Trading Zones (medium risk tolerance)

🎯 Entry Zones:

- $0.04 (medium risk): potential bounce from strong support with volume confirmation

- $0.055 (medium risk): short entry on resistance rejection in downtrend

🚪 Exit Zones:

- $0.06 (profit target): first profit target at resistance

- $0.035 (stop loss): stop loss below support

- $0.07 (profit target): extended profit target on short

Technical Indicators Analysis

📊 Volume Analysis:

Pattern: decreasing

Volume declining on downside moves, suggesting weakening selling pressure but no buyer conviction yet

📈 MACD Analysis:

Signal: bearish

MACD below zero with negative histogram divergence, confirming downtrend momentum


Disclaimer: This technical analysis by Market Analyst is for educational purposes only and should not be considered as financial advice. Trading involves risk, and you should always do your own research before making investment decisions. Past performance does not guarantee future results. The analysis reflects the author's personal methodology and risk tolerance (medium).

Real-world wins are stacking up. Lagrange Labs' DeepProve-1 proved a full LLM transformer, but imagine slicing it for targeted verification: only encode the attention heads that matter for your DeFi risk model. Kudelski Security highlights how zkML compresses these verifications on-chain, slashing gas by orders of magnitude. From fraud detection to private predictions in Web3 games, selective zk proofs for machine learning unlock use cases full proofs can't touch.

Future Plays: Momentum in zkML Slices

Looking ahead, sparsity-aware protocols from Cryptology ePrint will supercharge this. They craft zk-friendly models by exploiting neural net sparsity, cutting circuit sizes pre-proof. Combine with slice-based learning's residual boosts, and models hit peak performance on critical data subsets. Vid Kersic on Medium nails it: even public inputs benefit, since verification trumps full re-execution every time.
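
A rough way to see why sparsity shrinks circuits: count the multiplications a matrix-vector product needs when zero weights are skipped. Using multiplications as a proxy for circuit size is a simplifying assumption here; real constraint counts depend on the proof system and on how the protocol encodes sparsity.

```python
from typing import List

Matrix = List[List[float]]


def dense_mul_count(W: Matrix) -> int:
    """Multiplications if every weight is wired into the circuit."""
    return sum(len(row) for row in W)


def sparse_mul_count(W: Matrix) -> int:
    """Multiplications if zero weights are skipped entirely."""
    return sum(1 for row in W for w in row if w != 0)


def sparse_matvec(W: Matrix, x: List[float]) -> List[float]:
    """Matrix-vector product that only touches non-zero weights."""
    return [sum(w * x[j] for j, w in enumerate(row) if w != 0) for row in W]


W = [
    [0, 2, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 3],
]
x = [1, 2, 3, 4]
y = sparse_matvec(W, x)
dense = dense_mul_count(W)
sparse = sparse_mul_count(W)
```

In this toy layer, nine of the twelve weights are zero, so the sparse version needs a quarter of the multiplications while producing the same output.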

ZKML Slice Verification Trends

- Hybrid full/slice proofs boost adaptive security by targeting critical inferences only, like DSperse's modular framework dodging full-model costs for scalable zkML.

- AI-driven slice selection via meta-learning sharpens focus on tough data subsets, per Slice-Based Learning, lifting performance without full retrains.

- On-chain marketplaces for verified slices emerge, fueled by verifiers like zkVerify, enabling trade in proven model chunks at scale.

- Rollup integrations hit sub-second verifies, as zkVerify's L1 optimizes proof checks for decentralized AI speed demons.

- LayerEdge's proof normalization unlocks cross-chain composability, bringing diverse ZK proofs into one format for seamless ecosystems.

I've traded through 2018 crashes and 2021 booms; volatility rewards precision. zkML mirrors that. Target the slices with real alpha, like momentum in volatile assets, and ignore the rest. Frameworks like DSperse and tools like LayerEdge make it battle-tested.

World Network's intros remind us: ZK proofs let provers convince verifiers without revealing secrets. In targeted zkML verification, that secret is your model's edge. Deploy it decentralized, prove slices on-demand, and watch efficiency soar. This isn't hype; benchmarks scream 5x faster verifies, 22x tinier proofs. For builders in blockchain AI, it's the momentum play: verify, don't trust.
